Let’s talk about this amazing book, “Thinking in Systems” by Donella Meadows. It’s one of those rare gems that can change the way you see the world, no matter what you do for a living. The book is all about stepping back, looking at the big picture, and understanding how systems work before you try to change them.

Donella Meadows, who wrote the book, passed away in 2001, but her ideas are more relevant than ever. She teaches us how to analyze and solve problems at every level, from the smallest issues to the biggest challenges facing our world.

What did I get out of it?

Well, first of all, systems thinking is a powerful mental model. It can help us tackle all kinds of problems, from environmental, political, social, and economic challenges to matters in our personal and professional lives. The key is to understand that systems, whether they’re big or small, often behave in similar ways. And if we can figure out those patterns, we have a much better chance of making real, lasting change.

What’s really remarkable about systems thinking is that it goes beyond any single field or culture. When it’s done right, it can even help us understand history in a new way.

To really get a grip on the world around us, we need to recognize the systems that shape it. And to do that, we have to understand how those systems work. That means we can’t just stick to one narrow way of thinking. We need to be open to ideas from different disciplines and perspectives.

Here are some of the key things I learned from the book:

What are Systems?

A system is basically a bunch of connected parts that work together for a specific purpose. Systems can be self-organizing, self-repairing (up to a point), and resilient. Many of them can even evolve and adapt over time.

There are three things in a system:

- Components and elements.

- Relationships between components and elements.

- Purpose of the system.

It’s not just physical stuff that makes up a system. Intangible things like school pride or company culture are part of systems too.

If you want to know what a system’s real purpose is, don’t just listen to what people say. Watch how the system actually behaves over time. That will tell you a lot more.

A system is like a puzzle where all the pieces (elements) fit together in a specific way (interconnections) to create a complete picture (function/purpose). Just like a puzzle, a system can be made up of different parts that work together to make something happen.

Imagine a school as a system. It has many parts, like the students, teachers, classrooms, and even the school spirit (intangibles). All these parts are connected and organized in a way that helps the school function properly.

Systems can organize themselves, fix their own problems (to a certain extent), bounce back when things go wrong (resilient), and even change and grow over time (evolutionary/adaptive).

To really understand what a system is meant to do, it’s best to observe it in action for a while. Watch how it behaves and performs over time. Don’t just listen to what people say the system is supposed to do (rhetoric and stated goals).

One of the most important jobs of almost every system is to keep itself going (perpetuation), just as the human body needs food, oxygen, water, and sleep to stay alive.

When a systems thinker encounters a problem, the first thing they do is look for data, time graphs, and the history of the system. That’s because long-term behavior provides clues to the underlying system structure. And structure is the key to understanding not just what is happening but why.

When we talk about how a system behaves, we’re looking at how it performs over time. It might grow, slow down (stagnate), get worse (decline), go up and down (oscillate), act in a random way, or change and develop (evolve).

Systems work well because of three main qualities: resilience, self-organization, and hierarchy.

Resilience

Resilience is like a rubber band. It’s the ability of a system to bounce back when things change or go wrong.

- A system stays resilient through feedback loops. These loops work like a thermostat, helping the system adjust and get back to normal at different speeds and in different ways.

- Sometimes, there are feedback loops that can fix other broken feedback loops. This is like having a backup plan for your backup plan (meta-resilience).

- Even better, some systems have feedback loops that can learn, create, and improve over time. These systems are called self-organizing.

- A resilient system is like a big trampoline. It has a lot of room to bounce around, but if it gets too close to the edge, it will gently bounce back to safety. If a system loses resilience, it’s like the trampoline shrinking, making it easier to fall off.

- Resilient systems are often dynamic, meaning they can change and adapt. Static systems that don’t change can become brittle and break more easily.

Self-Organization

Self-organization is when a system can arrange and manage itself without anyone telling it what to do. This leads to complexity, diversity, and sometimes unpredictability.

- Just like resilience, self-organization is often given up for short-term gains in productivity. But this can make the whole system more fragile in the long run.

- Simple rules can lead to complex and unique outcomes through self-organization. Birds flying in groups follow a few basic rules like “stay close to the group” and “avoid bumping into your neighbor.” These simple actions result in beautiful, complex swirling patterns in the sky.

Hierarchy

A hierarchy is an arrangement of systems and subsystems, like a pyramid.

There are no separate systems. The world is a continuum. Where to draw a boundary around a system depends on the purpose of the discussion.

- Complex systems can evolve from simple systems only if there are stable steps in between. These complex systems often form hierarchies, which is why we see so many hierarchies in nature.

- Hierarchies are great because they make systems stable and resilient. They also help by reducing the amount of information each part of the system needs to deal with.

- In a hierarchy, the relationships within each subsystem (level) are stronger than the relationships between subsystems. Everything is still connected, but some connections are stronger than others. If designed well, this minimizes delays in feedback and prevents any level from being overwhelmed with information.

- Hierarchies can be studied by looking at each level separately.

- Hierarchies evolve from the bottom up. Their original purpose is to help the lower levels do their jobs better. When this is forgotten, the hierarchy can start to malfunction.

Stocks and Flows

The elements of a system are the easiest parts to understand because they are usually tangible, so we often work on those to improve the system’s output. But to understand a system’s behavior, we have to observe it over time, and the hardest part to see is its function or purpose.

The behavior of a system cannot be known just by knowing the elements of which the system is made.

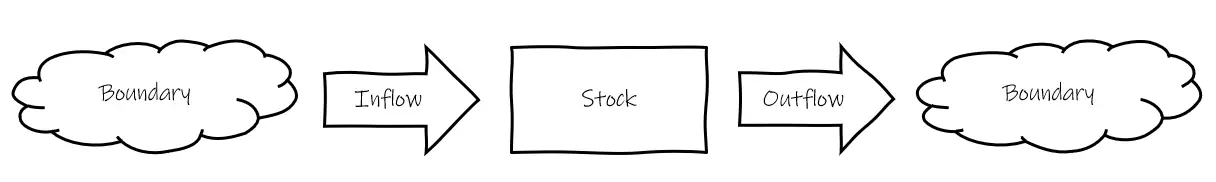

The behavior of a system breaks down into stocks and flows. Stocks are the elements of a system that you can see, count, or measure at any given time: water in a bathtub, books in a store, or money in a bank.

Things that change a stock over time are called “flows.” Think of water flowing in and out of a bathtub. This changes how much water is in the tub (the stock).

Sometimes, flows aren’t balanced. The water might be coming in faster than it goes out. This changes the stock over time.
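The bathtub picture is easy to play with in a few lines of Python. This is only a rough sketch: the function name, the litre units, and the constant flow rates are my own assumptions, not something from the book.

```python
def simulate_bathtub(initial_stock, inflow, outflow, steps, dt=1.0):
    """Track a stock (litres of water) changed by constant inflow and outflow rates."""
    stock = initial_stock
    history = [stock]
    for _ in range(steps):
        # The stock changes by the net flow; a tub cannot hold less than nothing.
        stock = max(0.0, stock + (inflow - outflow) * dt)
        history.append(stock)
    return history


# Inflow larger than outflow: the stock rises step by step.
filling = simulate_bathtub(initial_stock=50.0, inflow=5.0, outflow=3.0, steps=5)
# Outflow larger than inflow: the stock falls.
draining = simulate_bathtub(initial_stock=50.0, inflow=2.0, outflow=6.0, steps=5)

print(filling)   # [50.0, 52.0, 54.0, 56.0, 58.0, 60.0]
print(draining)  # [50.0, 46.0, 42.0, 38.0, 34.0, 30.0]
```

Notice that the stock only moves as fast as the net flow allows. Even a big change in the tap shows up in the water level gradually.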

Feedback Loops

The gap (discrepancy) between the current state and the ideal state drives feedback loops, and the bigger the gap, the stronger the feedback.

Our world is full of feedback loops, which are cycles of cause and effect that keep repeating in a system. A feedback loop happens when a stock (a quantity or level of something) influences decisions, rules, or actions, which then affect the flows (the rates of change) that modify the original stock.

There are two main types of feedback loops:

- Reinforcing (or positive) feedback loops: These amplify or reinforce changes in a system, leading to growth or decline.

- Balancing (or negative) feedback loops: These counteract or resist changes in a system, helping to maintain stability or equilibrium.

One important thing to remember is that feedback loops can only influence future behavior, not the current state of the system, because the effects take time to accumulate.
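To make the two loop types concrete, here is a small sketch. The function names and numbers (a room warming toward a thermostat setting, savings earning interest) are illustrative assumptions of mine. In both cases the current stock decides the next flow, so the loop can only shape what happens next.

```python
def balancing_loop(stock, goal, adjustment_rate, steps):
    """Each step closes a fraction of the gap between the stock and its goal."""
    history = [stock]
    for _ in range(steps):
        gap = goal - stock                 # the discrepancy drives the correction
        stock += adjustment_rate * gap     # bigger gap, bigger correction
        history.append(stock)
    return history


def reinforcing_loop(stock, growth_rate, steps):
    """Each step adds a flow proportional to the stock itself."""
    history = [stock]
    for _ in range(steps):
        stock += growth_rate * stock       # more stock, more growth
        history.append(stock)
    return history


room_temp = balancing_loop(stock=10.0, goal=20.0, adjustment_rate=0.5, steps=4)
savings = reinforcing_loop(stock=100.0, growth_rate=0.1, steps=3)

print(room_temp)  # [10.0, 15.0, 17.5, 18.75, 19.375]
print(savings)
```

The balancing loop takes smaller and smaller steps as it nears the goal, while the reinforcing loop takes bigger and bigger ones.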

Here are a couple of examples of feedback loops:

Drift to Low Performance (Reinforcing Feedback Loop)

Drift to low performance is a common problem in systems where performance standards are influenced by past performance, especially if there’s a bias towards noticing negative outcomes more than positive ones.

Here’s how it works:

- A company has a certain level of performance (stock).

- If the company performs poorly, decision-makers may lower their expectations and set lower standards for the future (decision/action).

- Lower standards make it more likely that performance will continue to decline (flow), further reducing the performance level (stock).

- This creates a reinforcing feedback loop, where lower performance leads to lower standards, which leads to even lower performance over time.

To counteract this, it’s important to keep performance standards consistent and base them on the best outcomes rather than the worst. This way, the same feedback loop structure can be used to create a virtuous cycle of high performance.
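Here is a toy version of that loop. Everything in it is an assumption for illustration: the starting score of 100, the `pessimism` bias of 5 points, and the rule that standards and performance each close half their gap per period.

```python
def drift_to_low_performance(steps, anchor_to_best=False):
    """Return performance over time under eroding or anchored standards."""
    performance = 100.0
    standard = 100.0
    best_seen = performance
    pessimism = 5.0  # assumed bias: results are perceived as worse than they are
    history = [performance]
    for _ in range(steps):
        perceived = performance - pessimism
        if anchor_to_best:
            # Counter-strategy: hold the standard at the best result ever seen.
            best_seen = max(best_seen, performance)
            standard = best_seen
        else:
            # Standards slide toward (pessimistically) perceived performance.
            standard += 0.5 * (perceived - standard)
        # Performance, in turn, moves toward whatever the standard is.
        performance += 0.5 * (standard - performance)
        history.append(performance)
    return history


eroding = drift_to_low_performance(steps=20)
anchored = drift_to_low_performance(steps=20, anchor_to_best=True)
print(round(eroding[-1], 1), anchored[-1])
```

With sliding standards the score drops every single period. With the standard pinned to the best result, the same structure holds performance steady.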

Success to the Successful (Reinforcing Feedback Loop)

Success to the successful is another example of a reinforcing feedback loop, where initial advantages lead to future success, creating a “rich get richer” dynamic.

Here’s an example:

- Two companies, A and B, compete in the same market. Company A has slightly more resources (stock) than Company B.

- With more resources, Company A can invest in better products, marketing, and talent (decision/action).

- These investments help Company A attract more customers and revenue (flow), increasing its resource advantage (stock) over Company B.

- As Company A’s resources grow, it can invest even more, while Company B falls further behind, creating a reinforcing feedback loop.

To counteract this, there are several strategies:

- Diversification: Encourage more participants in the system to reduce the impact of any one winner.

- Limiting dominance: Set rules to prevent winners from becoming too powerful, like anti-trust laws.

- Leveling the playing field: Create policies that give all participants a fair chance, regardless of past success.

- Ensuring fair competition: Design the system so that the rules don’t inherently favor previous winners.
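The Company A and Company B story, plus one of the counter-strategies, can be sketched like this. The model is my own simplification: I assume market share scales with resources squared (so a lead compounds), and `max_share` is a hypothetical rule standing in for something like anti-trust limits.

```python
def compete(resources_a, resources_b, steps, revenue=100.0, max_share=None):
    """Split a fixed revenue pool each round between two competitors."""
    history = [(resources_a, resources_b)]
    for _ in range(steps):
        # Assumption: share grows with resources squared, so advantages compound.
        weight_a, weight_b = resources_a ** 2, resources_b ** 2
        share_a = weight_a / (weight_a + weight_b)
        if max_share is not None:
            # Hypothetical limit on dominance: nobody takes more than max_share.
            share_a = min(max(share_a, 1.0 - max_share), max_share)
        resources_a += revenue * share_a
        resources_b += revenue * (1.0 - share_a)
        history.append((resources_a, resources_b))
    return history


def lead(history):
    """Ratio of A's resources to B's at the end of the run."""
    final_a, final_b = history[-1]
    return final_a / final_b


unchecked = compete(110.0, 100.0, steps=10)
capped = compete(110.0, 100.0, steps=10, max_share=0.5)
print(round(lead(unchecked), 3), round(lead(capped), 3))
```

Company A starts only 10% ahead. Left alone, its relative lead widens every round; with the cap in place, the lead shrinks instead.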

Understanding feedback loops and how they influence system behavior is crucial for managing complex systems effectively. By identifying reinforcing and balancing feedback loops, we can find ways to intervene and shape the system towards desired outcomes.

Bounded Rationality

Bounded rationality means that people make quite reasonable decisions based on the information they have. But they don’t have perfect information, especially about more distant parts of the system, and decisions made on that limited information can lead to unintended consequences.

Imagine you’re planning a road trip. You look at a map and choose the route that seems the fastest based on the information you have. However, you don’t know about construction work or heavy traffic on that route. As a result, your trip takes much longer than expected.

This is an example of bounded rationality. You made a reasonable decision based on the information available to you, but because that information was incomplete, the outcome was not what you intended.

Bounded rationality can cause things to happen much faster or slower than people expect, or cause systems to suddenly exhibit new behaviors that no one has seen before. This is because the limited information people have can make it hard to anticipate how the system as a whole will behave.

How to Deal With It

- Get the big picture: Don’t just focus on one tiny part of a problem; zoom out to see how it connects to everything else.

- Share information better: Make sure the right people know what’s going on so they can make better decisions.

- Make the system itself stronger: Think about how the rules and goals of the system affect what people decide to do. Small changes here can make a big difference!

However, it’s also important to remember that our mental models and representations of the world are always incomplete. We can never have perfect information about a complex system.

Tragedy of the Commons

The tragedy of the commons occurs when individuals acting in their own self-interest deplete a shared resource, even though it’s not in anyone’s long-term interest for this to happen.

A classic example is a shared pasture where many herders graze their cattle. Each herder has an incentive to add more cattle to the pasture because they reap the full benefit of each additional cow while the cost of overgrazing is shared among all the herders. Over time, this leads to overgrazing and the depletion of the pasture, which harms everyone.

The key problem in the tragedy of the commons is that the feedback signals are either invisible or too delayed. The negative consequences of individual actions are not immediately apparent or felt directly by the individuals causing them.

How to fix it:

- Teach people: Explain how everyone’s actions add up to harm the shared resource.

- Divide it up: Give each person their own section, so they’re motivated to take care of it.

- Make rules: Set limits on how much of the shared resource people can use.
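The pasture example, and the "make rules" fix, can be sketched as a small simulation. All the numbers are invented for illustration: five herders, grass that regrows logistically, and each herder adding one cow a year because nothing tells them to stop. The `herd_cap` parameter is a hypothetical quota per herder.

```python
def graze_commons(years, herders=5, herd_cap=None):
    """Return the grass stock per year on a shared pasture."""
    capacity = 1000.0
    grass = capacity          # shared stock
    regrowth_rate = 0.3
    cattle_per_herder = 10
    grass_per_cow = 1.0       # eaten per cow per year
    history = [grass]
    for _ in range(years):
        # Each herder adds a cow: the gain is theirs, the cost is shared.
        if herd_cap is None or cattle_per_herder < herd_cap:
            cattle_per_herder += 1
        demand = herders * cattle_per_herder * grass_per_cow
        # Logistic regrowth: slow when the pasture is full or nearly bare.
        regrowth = regrowth_rate * grass * (1.0 - grass / capacity)
        grass = max(0.0, grass + regrowth - demand)
        history.append(grass)
    return history


open_access = graze_commons(years=40)
managed = graze_commons(years=40, herd_cap=12)
print(open_access[-1], round(managed[-1], 1))
```

Without a limit, the pasture is stripped bare well before year 40 and cannot recover. With the quota, grazing stays below what the grass can regrow and the stock settles at a healthy level.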

Understanding and Improving Our Mental Models

Imagine you have a friend who lives in another country. You’ve never visited them, but you have an idea in your head about what their life is like based on what they’ve told you, photos they’ve shared, and what you’ve seen on TV or the internet. This idea you have is like a mental model—it’s a simplified version of reality that helps you understand and make sense of something.

We all have mental models about the world around us. They shape how we think, make decisions, and act. But just like your idea of your friend’s life, our mental models are not perfect. They’re always simplifications and can sometimes be wrong or missing important details.

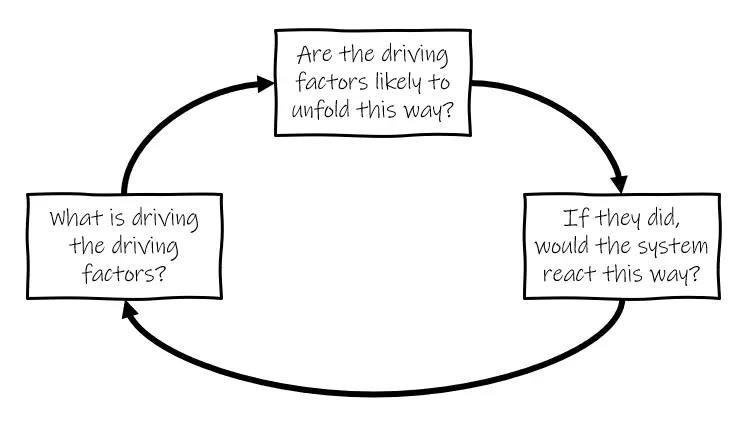

To figure out if a mental model is useful, we can ask ourselves three questions:

- Are the key parts of the model likely to happen as it suggests?

- If those parts did happen that way, would the situation play out as the model predicts?

- What deeper reasons are causing the key parts of the model to happen?

A model’s usefulness doesn’t depend on whether its predictions are 100% realistic (because we can never be certain about the future). What matters more is whether the model’s behavior matches how things would happen in the real world under similar conditions.

It’s important to remember that everything we think we know about the world is a kind of model. The words we use, the maps we draw, the books we read, the equations we write, and the thoughts in our heads are all models. They’re helpful, but they’re not exactly the same as reality.

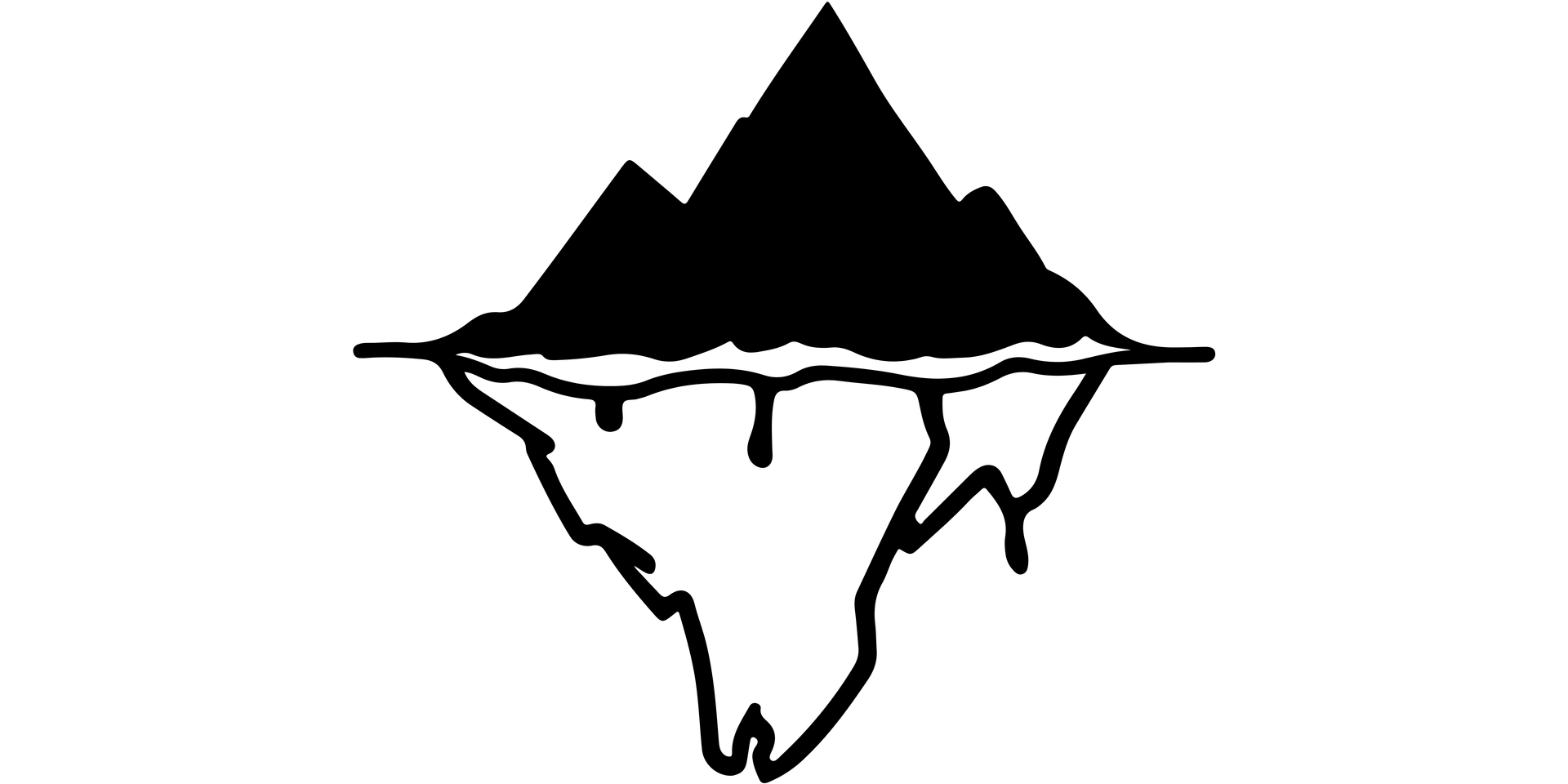

Most of the time, our mental models match up pretty well with the real world. That’s why they work for us. But sometimes, the world can trick us by showing us a series of events that grab our attention. Like the tip of an iceberg sticking out of the water, these events are the most obvious part of a bigger, more complex situation. But they’re not always the most important part. By looking deeper and seeing how events build up into patterns over time, we can understand things better and be less surprised when something unexpected happens.

Models that focus on patterns of behavior over time are more helpful than those that just react to single events. But even behavior-based models have their limits. They often focus too much on how fast things are changing and not enough on how things build up over time. Also, just because two things are changing in a system doesn’t mean they’ll always have a steady, predictable relationship.

To make our mental models better, we need to put them to the test. By carefully checking our ideas, we can spot uncertainties, fix mistakes, and come up with models that are more flexible and adaptable. This kind of mental flexibility—the willingness to redraw boundaries, notice when a situation has shifted into a new phase, and see how to reshape the structure of a system—is super important in a world where things are always changing.

Limiting Factors and Growth

At any given time, the input that is most important to a system is the one that is most limiting. Insight comes not only from recognizing which factor is limiting, but from seeing that growth itself depletes or enhances limits and therefore changes what is limiting.

In any system, the most important input is often the one that is most scarce or limited. The system’s growth and performance depend on having enough of this critical resource. As the system grows, it can change its own limits. Growth can deplete limited resources, making the limits more constraining. However, growth can also enhance a system’s ability to acquire or use limited resources, effectively expanding its limits.
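A quick way to see this is to ask how much output each input could support on its own and then take the smallest answer. The bakery numbers below are made up, and `limiting_factor` is just a helper name I chose for the sketch.

```python
def limiting_factor(available, required_per_unit):
    """Return the scarcest input and how many units of output it allows."""
    units_supported = {
        name: available[name] / required_per_unit[name]
        for name in required_per_unit
    }
    scarcest = min(units_supported, key=units_supported.get)
    return scarcest, units_supported[scarcest]


# What one loaf needs (grams of flour and yeast, minutes of oven time).
per_loaf = {"flour": 500, "yeast": 10, "oven_minutes": 3}
pantry = {"flour": 100_000, "yeast": 1_000, "oven_minutes": 1_200}

print(limiting_factor(pantry, per_loaf))  # ('yeast', 100.0)

# Relieving one limit just moves the constraint somewhere else.
pantry["yeast"] = 5_000
print(limiting_factor(pantry, per_loaf))  # ('flour', 200.0)
```

Buying more flour in the first situation would change nothing. Once yeast is plentiful, flour becomes the thing to watch, which is the shifting-limits idea in miniature.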

Nonlinear Relationships and Shifting System Behavior

You can’t navigate well in an interconnected, feedback-dominated world unless you take your eyes off short-term events and look for long-term behavior and structure; unless you are aware of false boundaries and bounded rationality; unless you take into account limiting factors, nonlinearities and delays. You are likely to mistreat, mis-design, or misread systems if you don’t respect their properties of resilience, self-organization and hierarchy.

Many relationships in systems are nonlinear, meaning that a change in one part of the system doesn’t always cause a proportional change in another part. Small changes can have big effects, and vice versa. This can be surprising because we often expect things to be linear.

Nonlinear relationships are important because they can change the balance of feedback loops in a system. Feedback loops are cycles where a change in one part of the system leads to further changes that either reinforce or counteract the original change. When nonlinear relationships make certain feedback loops stronger or weaker, the whole system can shift into a different pattern of behavior.
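A standard textbook way to see loop dominance shift is logistic growth, which I’m using here as an illustrative assumption rather than an example taken from the book. One nonlinear term, `crowding`, decides whether the reinforcing loop (growth) or the balancing loop (the capacity limit) is in charge.

```python
def logistic_growth(population, growth_rate, capacity, steps):
    """Growth that is self-reinforcing early on and self-limiting later."""
    history = [population]
    for _ in range(steps):
        # Nonlinear term: close to 1 when there is room, close to 0 near capacity.
        crowding = 1.0 - population / capacity
        population += growth_rate * population * crowding
        history.append(population)
    return history


history = logistic_growth(population=10.0, growth_rate=0.5, capacity=1000.0, steps=30)
increments = [later - earlier for earlier, later in zip(history, history[1:])]
fastest_step = increments.index(max(increments))
print(fastest_step, round(history[-1], 2))
```

Nothing in the rules changes partway through. Growth speeds up, peaks around the midpoint, and then flattens out, purely because the nonlinear term shifts which loop is stronger.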

Leverage Points

Leverage points are places within a system where a small change can lead to a large shift in the system’s behavior. These points are often not obvious and can be counterintuitive. Here are some examples of leverage points, ordered from least to most effective:

- Numbers: Constants and parameters, such as subsidies, taxes, and standards, are the least effective leverage points. Changing these numbers rarely alters the fundamental behavior of the system.

- Buffers: These are stocks that are large relative to their flows and so help stabilize the system. Increasing the size of a buffer can stabilize the system, but if the buffer becomes too large, the system can become inflexible.

- Stock and Flow Structures: The physical layout and interconnections of stocks and flows can significantly impact the system’s behavior. Rebuilding the system is often the only way to fix a poorly designed structure. Imagine a city with a lot of traffic jams. Rearranging the roads and highways (the structure) could help traffic flow better, but it’s a big job to rebuild everything.

Systems can’t be controlled but they can be designed and redesigned.

- Delays: The time delays between system changes and feedback responses are critical. Longer delays relative to the rate of change can cause instability and oscillations. Adjusting the rate of change is often easier than changing the delay length.

Slowing the growth is usually a more powerful leverage point in systems than strengthening balancing loops and far more preferable than letting the reinforcing loop run.

- Balancing Feedback Loops: These are stabilizing loops that counteract change. Weakening or removing these loops, especially “emergency” response mechanisms, can reduce the system’s resilience and ability to survive under varying conditions.

One of the big mistakes is removing these “emergency” response mechanisms because they are rarely used and appear costly. There may be no effect in the short term, but in the long term you drastically reduce the range of conditions over which the system can survive.

- Reinforcing Feedback Loops: These are loops that amplify change and can lead to growth, explosion, erosion, or collapse. Slowing down reinforcing loops is usually more effective than strengthening balancing loops.

- Information Flows: The structure of who has access to what information can significantly influence the system’s behavior. If people don’t have the right information, they might make bad decisions. It’s like a company where the sales team doesn’t talk to the production team: they might sell more than the factory can make, causing problems.

- Rules: Incentives, punishments, and constraints are powerful leverage points. Those who have the power to change the rules can significantly influence the system.

If you want to understand the deepest malfunctions of systems, pay attention to the rules and who has power over them.

- Self-Organization: The ability of a system to add, change, or evolve its own structure is a form of resilience. It’s like how a sports team can adjust its strategy during a game without the coach telling them what to do. Systems that can self-organize can adapt to and survive many changes.

- Goals: The purpose or function of the system is a high-level leverage point. All other aspects of the system will be shaped to align with the overall goal.

- Paradigms: The mindset or worldview from which the system arises is the most fundamental leverage point. Changing paradigms is difficult but can completely transform the system. It’s like the shift from believing the Earth is the center of the universe to realizing the Earth revolves around the Sun: it changes everything about how we understand the solar system.

- Transcending Paradigms: The ability to remain unattached to any particular paradigm and maintain flexibility of perspective is a profound source of leverage when dealing with complex systems.
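Of the leverage points above, delays are the easiest to demonstrate in code. In this sketch (my own toy model, with made-up numbers) a balancing loop steers a stock toward a goal, but the decision-maker only sees the stock as it was `delay_steps` ago.

```python
from collections import deque


def adjust_with_delay(delay_steps, steps=40, goal=100.0, gain=0.3):
    """Steer a stock toward a goal using readings that are delay_steps old."""
    stock = 0.0
    # Sliding window of past readings; the oldest one is what gets perceived.
    readings = deque([stock] * (delay_steps + 1), maxlen=delay_steps + 1)
    history = [stock]
    for _ in range(steps):
        perceived = readings[0]
        stock += gain * (goal - perceived)  # correct based on stale information
        readings.append(stock)
        history.append(stock)
    return history


no_delay = adjust_with_delay(delay_steps=0)
delayed = adjust_with_delay(delay_steps=3)
print(round(max(no_delay), 2), round(max(delayed), 2))
```

With fresh information the stock glides up to the goal and never passes it. With a three-step delay, the same rule keeps pushing after the goal has already been reached, so the stock overshoots and swings back and forth before settling.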

Who Should Read It?

“Thinking in Systems” is a book that can be challenging to read, as it’s very academic. However, it’s a book that offers insights for anyone who wants to understand the world around them better.

If you’re someone who is curious about how different fields of study connect and influence each other, this book is a great starting point. “Thinking in Systems” helps us see the importance of looking at problems and situations from multiple perspectives, not just focusing on one narrow view.

Reading this book can be a humbling experience. It shows us how complex the systems around us are, from our own bodies to the global economy. It also reminds us that our understanding of these systems is limited and that we can’t always control them as much as we might like.

The book might not be a light, easy read, but it’s worth the effort. In fact, it’s the kind of book that you might need to read more than once to fully appreciate. I’m sure that every time I re-read it, I’ll discover new insights and connections that I didn’t see before.

So, who should read “Thinking in Systems”? Really, anyone who wants to be a clearer, more holistic thinker. Whether you’re a student, a professional, or just someone who’s curious about the world, this book can help you see things in a new light.

It’s especially valuable for people who want to tackle big, complex problems. Whether you’re interested in improving your own health, making your business more successful, or addressing global challenges like climate change, understanding systems thinking is a powerful tool.